Human Computer Interaction (CS408) VU

Lecture 27. Behavior & Form Part II

Learning Goals

The aim of this lecture is to introduce you to the study of Human Computer Interaction, so that after studying it you will be able to:

· Understand narratives and scenarios

· Define requirements using persona-based design

27.1 Postures for the Web

Designers may be tempted to think that the Web is, by necessity, different from desktop applications in terms of posture. Although there are variations that exhibit combinations of these postures, the basic four stances really do cover the needs of most Web sites and Web applications.

Information-oriented sites

Sites that are purely informational, which require no complex transactions to take place beyond navigating from page to page and limited search, must balance two forces: the need to display a reasonable density of useful information, and the need to allow first-time and infrequent users to easily learn and navigate the site. This implies a tension between sovereign and transient attributes in informational sites. Which stance is more dominant depends largely on who the target personas are and what their behavior patterns are when using the site: Are they infrequent or one-time users, or are they repeat users who will return weekly or daily to view content?

The frequency at which content can be updated on a site does, in some respects, influence this behavior: Informational sites with daily-updated information will naturally attract repeat users more than a monthly-updated site. Infrequently updated sites may be used more for occasional reference (assuming the information is not too topical) than for heavy repeat use and should then be given more of a transient stance than a sovereign one. What's more, the site can configure itself into a more sovereign posture by paying attention to how often a particular user visits.
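The idea of a site adjusting its posture to a visitor's habits can be sketched in a few lines. This is only an illustration, not a method given in the lecture: the function name `choose_posture`, the 30-day window, and the threshold of four visits are all assumptions picked for the example.

```python
from datetime import datetime, timedelta


def choose_posture(visit_times, now, window_days=30, sovereign_min_visits=4):
    """Return "sovereign" for frequent visitors, otherwise "transient".

    window_days and sovereign_min_visits are illustrative assumptions;
    a real site would tune them to its own personas.
    """
    cutoff = now - timedelta(days=window_days)
    recent_visits = [t for t in visit_times if t >= cutoff]
    if len(recent_visits) >= sovereign_min_visits:
        return "sovereign"
    return "transient"


now = datetime(2024, 1, 31)
daily_reader = [now - timedelta(days=d) for d in range(10)]  # ten recent visits
occasional_reader = [now - timedelta(days=90)]               # one old visit

print(choose_posture(daily_reader, now))
print(choose_posture(occasional_reader, now))
```

A returning daily reader would then be shown the denser, full-screen layout, while an occasional visitor keeps the simpler layout with more navigational help.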

SOVEREIGN ATTRIBUTES

Detailed information display is best accomplished by assuming a sovereign stance. By assuming full-screen use, designers can take advantage of all the possible space available to clearly present both the information itself and the navigational tools and cues to keep users oriented.

The only fly in the ointment of sovereign stance on the Web is choosing which full-screen resolution is appropriate. In fact, this is an issue for desktop applications as well. The only difference between the desktop and the Web in this regard is that Web sites have little leverage in influencing what screen resolution users will have. Users, however, who are spending money on expensive desktop productivity applications, will probably make sure that they have the right hardware to support the needs of the software. Thus, Web designers need to make a decision early on what the lowest common denominator they wish to support will be. Alternatively, they must code more complex sites that may be optimal on higher resolution screens, but which are still usable (without horizontal scrolling) on lower-resolution monitors.

TRANSIENT ATTRIBUTES

The less frequently your primary personas access the site, the more transient a stance the site needs to take. In an informational site, this manifests itself in terms of ease and clarity of navigation.

Sites used for infrequent reference might be bookmarked by users: You should make it possible for them to bookmark any page of information so that they can reliably return to it at any later time.

Users will likely visit sites with weekly to monthly updated material intermittently, and so navigation there must be particularly clear. If the site can retain information about past user actions via cookies or server-side methods and present information that is organized based on what interested them previously, this could dramatically help less frequent users find what they need with minimal navigation.

Transactional sites and Web applications

Transactional Web sites and Web applications have many of the same tensions between sovereign and transient stances that informational sites do. This is a particular challenge because the level of interaction can be significantly more complex.

Again, a good guide is the goals and needs of the primary personas: Are they consumers, who will use the site at their discretion, perhaps on a weekly or monthly basis, or are they employees who (for an enterprise or B2B Web application) must use the site as part of their job on a daily basis? Transactional sites that are used for a significant part of an employee's job should be considered full sovereign applications.

On the other hand, e-commerce, online banking, and other consumer-oriented transactional sites must, like informational sites, balance sovereign and transient stances. In fact, many consumer transactional sites have a heavy informational aspect because users like to research and compare products, investments, and other items to be transacted upon. For these types of sites, navigational clarity is very important, as is access to supporting information and the streamlining of transactions. Amazon.com has addressed many of these issues quite well, via one-click ordering, good search and browsing capability, online reviews of items, recommendation lists, a persistent shopping cart, and tracking of recently viewed items. If Amazon has a fault, it may be that it tries to do a bit too much: Some of the navigational links near the bottom of the pages likely don't get hit very often.

27.2 Web portals

Early search engines allowed people to find and access content and functions distributed throughout the world on the Web. They served as portals in the original sense of the word -- ways to get somewhere else. Nothing really happens in these navigational portals; you get in, you go somewhere, you get out. They are used exclusively to gain access quickly to unrelated information and functions.

If the user requires access via a navigational portal relatively infrequently, the appropriate posture is transient, providing clear, simple navigational controls and getting out of the way. If the user needs more frequent access, the appropriate posture is auxiliary: a small and persistent panel of links (like the Windows taskbar).

As portal sites evolved, they offered more integrated content and function and grew beyond being simple stepping-stones to another place. Consumer-oriented portals provide unified access to content and functionality related to a specific topic, and enterprise portals provide internal access to important company information and business tools. In both cases, the intent is essentially to create an environment in which users can access a particular kind of information and accomplish a particular kind of work: environmental portals. Actual work is done in an environmental portal. Information is gathered from disparate sources and acted upon; various tools are brought together to accomplish a unified purpose.

An environmental portal's elements need to relate to one another in a way that helps users achieve a specific purpose. When a portal creates a working environment, it also creates a sense of place; the portal is no longer a way to get somewhere else, but a destination in and of itself. The appropriate posture for an environmental portal is thus sovereign.

Within an environmental portal, the individual elements function essentially as small applications running simultaneously -- as such, the elements themselves also have postures:

· Auxiliary elements: Most of the elements in an environmental portal have an auxiliary posture; they typically present aggregated sets of information to which the user wants constant access (such as dynamic status monitors), or simple functionality (small applications, link lists, and so on). Auxiliary elements are, in fact, the key building block of environmental portals. The sovereign portal is, therefore, composed of a set of auxiliary posture mini-applications.

· Transient elements: In addition to auxiliary elements, an environmental portal often provides transient portal services as well. Their complexity is minimal; they are rich in explanatory elements, and they are used for short periods on demand. Designers should give a transient posture to any embedded portal service that is briefly and temporarily accessed (such as a to-do list or package-tracking status display) so that it does not compete with the sovereign/auxiliary posture of the portal itself. Rather it becomes a natural, temporary extension of it.

When migrating traditional applications into environmental portals, one of the key design challenges is breaking the application apart into a proper set of portal services with auxiliary or transient posture. Sovereign, full-browser applications are not appropriate within portals because they are not perceived as part of the portal once launched.

27.3 Postures for Other Platforms

Handheld devices, kiosks, and software-enabled appliances each have slightly different posture issues. Like Web interfaces, these other platforms typically express a tension between several postures.

Kiosks

The large, full-screen nature of kiosks would appear to bias them towards sovereign posture, but there are several reasons why the situation is not quite that simple. First, users of kiosks are often first-time users (with the exception, perhaps, of ATM users), and are in most cases not daily users. Second, most people do not spend any significant amount of time in front of a kiosk: They perform a simple transaction or search, get what information they need, and then move on. Third, most kiosks employ either touchscreens or bezel buttons to the side of the display, and neither of these input mechanisms supports the high data density you would expect of a sovereign application. Fourth, kiosk users are rarely comfortably seated in front of an optimally placed monitor, but are standing in a public place with bright ambient light and many distractions. These user behaviors and constraints should bias most kiosks towards transient posture, with simple navigation, large controls, and rich visuals to attract attention and hint at function.

Educational and entertainment kiosks vary somewhat from the strict transient posture required of more transactional kiosks. In this case, exploration of the kiosk environment is more important than the simple completion of single transactions or searches. Here, more data density and more complex interactions and visual transitions can sometimes be introduced to positive effect, but the limitations of the input mechanisms need to be carefully respected, lest the user lose the ability to successfully navigate the interface.

Handheld devices

Designing for handheld devices is an exercise in hardware limitations: input mechanisms, screen size and resolution, and power consumption, to name a few. One of the most important insights that many designers have now realized with regard to handheld devices is that handhelds are often not standalone systems. They are, as in the case of personal information managers like Palm and Pocket PC devices, satellites of a desktop system, used more to view information than perform heavy input on their own. Although folding keyboards can be purchased for many handhelds, this, in essence, transforms them into desktop systems (with tiny screens). In the role of satellite devices, an auxiliary posture is appropriate for the most frequently used handheld applications -- typical PIM, e-mail, and Web browsing applications, for example. Less frequently or more temporarily used handheld applications (like alarms) can adopt a more transient posture.

Cellular telephones are an interesting type of handheld device. Phones are not satellite devices: they are primary communication devices. However, from an interface posture standpoint, phones are really transient. You place a call as quickly as possible and then abandon the interface in favor of your conversation. The best interface for a phone is arguably non-visual. Voice activation is perfect for placing a call; opening the flip lid on a phone is probably the most effective way of answering it (or again using voice activation for hands-free use). The more transient the phone's interface is, the better.

In the last couple of years, handheld data devices and handheld phones have been converging. These convergence devices run the risk of making phone operation too complex and data manipulation too difficult, but the latest breed of devices like the Handspring Treo has delivered a successful middle ground. In some ways, they have made the phone itself more usable by allowing the satellite nature of the device to aid in the input of information to the phone: Treos make use of desktop contact information to synchronize the device's phonebook, for example, thus removing the previously painful data entry step and reinforcing the transient posture of the phone functionality. It is important, when designing for these devices, to recognize the auxiliary nature of data functions and the transient nature of phone functions, using each to reinforce the utility of the other. (The data dialup should be minimally transient, whereas the data browsing should be auxiliary.)

Appliances

Most appliances have extremely simple displays and rely heavily on hardware buttons and dials to manipulate the state of the appliance. In some cases, however, major appliances (notably washers and dryers) will sport color LCD touchscreens allowing rich output and direct input.

Appliance interfaces, like the phone interfaces mentioned in the previous section, should primarily be considered transient posture interfaces. Users of these interfaces will seldom be technology-savvy and should, therefore, be presented with the simplest and most straightforward interfaces possible. These users are also accustomed to hardware controls. Unless an unprecedented ease of use can be achieved with a touchscreen, dials and buttons (with appropriate audible feedback, and visual feedback via a view-only display or even hardware lamps) may be a better choice. Many appliance makers make the mistake of putting dozens of new -- and unwanted -- features into their new, digital models. Instead of making it easier, that "simple" LCD touchscreen becomes a confusing array of unworkable controls.

Another reason for a transient stance in appliance interfaces is that users of appliances are trying to get something very specific done. Like the users of transactional kiosks, they are not interested in exploring the interface or getting additional information; they simply want to put the washer on normal cycle or cook their frozen dinners.

One aspect of appliance design demands a different posture: Status information indicating what cycle the washer is on or what the VCR is set to record should be provided as a daemonic icon, providing minimal status quietly in a corner. If more than minimal status is required, an auxiliary posture for this information then becomes appropriate.

27.4 Flow and Transparency

When people are able to concentrate wholeheartedly on an activity, they lose awareness of peripheral problems and distractions. The state is called flow, a concept first identified by Mihaly Csikszentmihalyi, professor of psychology at the University of Chicago, and author of Flow: The Psychology of Optimal Experience (HarperCollins, 1991).

In Peopleware: Productive Projects and Teams (Dorset House, 1987), Tom DeMarco and Timothy Lister describe flow as a "condition of deep, nearly meditative involvement." Flow often induces a "gentle sense of euphoria" and can make you unaware of the passage of time. Most significantly, a person in a state of flow can be extremely productive, especially when engaged in process-oriented tasks such as "engineering, design, development, and writing." Today, these tasks are typically performed on computers while interacting with software. Therefore, it behooves us to create a software interaction that promotes and enhances flow, rather than one that includes potentially flow-breaking or flow-disturbing behavior. If the program consistently rattles the user out of flow, it becomes difficult for him to regain that productive state.

If the user could achieve his goals magically, without your program, he would. By the same token, if the user needed the program but could achieve his goals without going through its user interface, he would. Interacting with software is not an aesthetic experience (except perhaps in games, entertainment, and exploration-oriented interactive systems). For the most part, it is a pragmatic exercise that is best kept to a minimum.

Directing your attention to the interaction itself puts the emphasis on the side effects of the tools rather than on the user's goals. A user interface is an artifact, not directly related to the goals of the user. Next time you find yourself crowing about what cool interaction you've designed, just remember that the ultimate user interface for most purposes is no interface at all.

To create flow, our interaction with software must become transparent. In other words, the interface must not call attention to itself as a visual artifact, but must instead, at every turn, be at the service of the user, providing what he needs at the right time and in the right place. There are several excellent ways to make our interfaces recede into invisibility. They are:

1. Follow mental models.

2. Direct, don't discuss.

3. Keep tools close at hand.

4. Provide modeless feedback.

We will now discuss each of these methods in detail.

Follow mental models

We introduced the concept of user mental models in previous lectures. Different users will have different mental models of a process, but they will rarely visualize them in terms of the detailed innards of the computer process. Each user naturally forms a mental image about how the software performs its task. The mind looks for some pattern of cause and effect to gain insight into the machine's behavior.

For example, in a hospital information system, the physicians and nurses have a mental model of patient information that derives from the patient records that they are used to manipulating in the real world. It therefore makes most sense to find patient information by using names of patients as an index. Each physician has certain patients, so it makes additional sense to filter the patients in the clinical interface so that each physician can choose from a list of her own patients, organized alphabetically by name. On the other hand, in the business office of the hospital, the clerks there are worried about overdue bills. They don't initially think about these bills in terms of who or what the bill is for, but rather in terms of how late the bill is (and perhaps how big the bill is). Thus, for the business office interface, it makes sense to sort first by time overdue and perhaps by amount due, with patient names as a secondary organizational principle.
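The two views in the hospital example differ only in how the same records are filtered and ordered. The sketch below shows one way that might look; the `Bill` record, its field names, and the sample data are hypothetical and serve only to illustrate the two orderings described above.

```python
from dataclasses import dataclass


@dataclass
class Bill:
    # Hypothetical record layout; field names are assumptions for this sketch.
    patient: str
    physician: str
    days_overdue: int
    amount_due: float


def clinical_view(bills, physician):
    """One physician's own patients, organized alphabetically by name."""
    names = {b.patient for b in bills if b.physician == physician}
    return sorted(names)


def business_office_view(bills):
    """Most overdue first, then largest amount, then patient name."""
    return sorted(
        bills, key=lambda b: (-b.days_overdue, -b.amount_due, b.patient)
    )


bills = [
    Bill("Khan", "Dr. Ali", 10, 200.0),
    Bill("Ahmed", "Dr. Ali", 45, 150.0),
    Bill("Baig", "Dr. Sara", 45, 900.0),
]

print(clinical_view(bills, "Dr. Ali"))
print([b.patient for b in business_office_view(bills)])
```

The data is identical in both cases; each interface simply presents it in the order that matches how its users already think about it.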

Direct, don't discuss

Many developers imagine the ideal interface to be a two-way conversation with the user. However, most users don't see it that way. Most users would rather interact with the software in the same way they interact with, say, their cars. They open the door and get in when they want to go somewhere. They step on the accelerator when they want the car to move forward and the brake when it is time to stop; they turn the wheel when they want the car to turn.

This ideal interaction is not a dialog -- it's more like using a tool. When a carpenter hits nails, he doesn't discuss the nail with the hammer; he directs the hammer onto the nail. In a car, the driver -- the user -- gives the car direction when he wants to change the car's behavior. The driver expects direct feedback from the car and its environment in terms appropriate to the device: the view out the windshield, the readings on the various gauges on the dashboard, the sound of rushing air and tires on pavement, the feel of lateral g-forces and vibration from the road. The carpenter expects similar feedback: the feel of the nail sinking, the sound of the steel striking steel, and the heft of the hammer's weight.

The driver certainly doesn't expect the car to interrogate him with a dialog box. One of the reasons software often aggravates users is that it doesn't act like a car or a hammer. Instead, it has the temerity to try to engage us in a dialog -- to inform us of our shortcomings and to demand answers from us. From the user's point of view, the roles are reversed: It should be the user doing the demanding and the software doing the answering.

With direct manipulation, we can point to what we want. If we want to move an object from A to B, we click on it and drag it there. As a general rule, the better, more flow-inducing interfaces are those with plentiful and sophisticated direct manipulation idioms.

Keep tools close at hand

Most programs are too complex for one mode of direct manipulation to cover all their features. Consequently, most programs offer a set of different tools to the user. These tools are really different modes of behavior that the program enters. Offering tools is a compromise with complexity, but we can still do a lot to make tool manipulation easy and to prevent it from disturbing flow. Mainly, we must ensure that tool information is plentiful and easy to see and attempt to make transitions between tools quick and simple.

Tools should be close at hand, preferably on palettes or toolbars. This way, the user can see them easily and can select them with a single click. If the user must divert his attention from the application to search out a tool, his concentration will be broken. It's as if he had to get up from his desk and wander down the hall to find a pencil. He should never have to put tools away manually.

Modeless feedback

As we manipulate tools, it's usually desirable for the program to report on their status, and on the status of the data we are manipulating with the tool. This information needs to be clearly posted and easy to see without obscuring or stopping the action.

When the program has information or feedback for the user, it has several ways to present it. The most common method is to pop up a dialog box on the screen. This technique is modal: It puts the program into a mode that must be dealt with before it can return to its normal state, and before the user can continue with her task. A better way to inform the user is with modeless feedback.

Feedback is modeless whenever information for the user is built into the main interface and doesn't stop the normal flow of system activities and interaction. In Word, you can see what page you are on, what section you are in, how many pages are in the current document, what position the cursor is in, and what time it is, all modelessly, just by looking at the status bar at the bottom of the screen.

If you want to know how many words are in your document, however, you have to call up the Word Count dialog from the Tools menu. For people writing magazine articles, who need to be careful about word count, this information would be better delivered modelessly.
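As a rough illustration of delivering word count modelessly, an editor could recompute a status-bar string after every edit instead of waiting for the user to open a dialog. The function `status_line` and its output format are assumptions made for this sketch; they do not describe how Word or any other real editor works.

```python
def status_line(text, cursor_index):
    """Build a status-bar string that can be refreshed on every edit.

    cursor_index is the character offset of the cursor in text.
    """
    word_count = len(text.split())
    # Lines are numbered from 1; count the newlines before the cursor.
    line_number = text.count("\n", 0, cursor_index) + 1
    return f"Line {line_number} | {word_count} words"


document = "Hello world\nsecond line here"
print(status_line(document, 14))
```

Because the string is always visible and always current, the writer never has to interrupt the task to ask for it.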

Jet fighters have a heads-up display, or HUD, that superimposes the readings of critical instrumentation onto the forward view of the cockpit's windscreen. The pilot doesn't even have to use peripheral vision, but can read vital gauges while keeping his eyes glued on the opposing fighter.

Our software should display information like a jet fighter's HUD. The program could use the edges of the display screen to show the user information about activity in the main work area of applications. Many drawing applications, such as Adobe Photoshop, already provide ruler guides, thumbnail maps, and other modeless feedback in the periphery of their windows.

27.5 Orchestration

When a novelist writes well, the craft of the writer becomes invisible, and the reader sees the story and characters with clarity undisturbed by the technique of the writer. Likewise, when a program interacts well with a user, the interaction mechanics precipitate out, leaving the user face-to-face with his objectives, unaware of the intervening software. The poor writer is a visible writer, and a poor interaction designer looms with a clumsily visible presence in his software.

To a novelist, there is no such thing as a "good" sentence. There are no rules for the way sentences should be constructed to be transparent. It all depends on what the protagonist is doing, or the effect the author wants to create. The writer knows not to insert an obscure word in a particularly quiet and sensitive passage, lest it sound like a sour note in a string quartet. The same goes for software. The interaction designer must train his ears to hear sour notes in the orchestration of software interaction. It is vital that all the elements in an interface work coherently together towards a single goal. When a program's communication with the user is well orchestrated, it becomes almost invisible.

Webster defines orchestration as "harmonious organization," a reasonable phrase for what we should expect from interacting with software. Harmonious organization doesn't yield to fixed rules. You can't create guidelines like, "Five buttons on a dialog box are good" and "Seven buttons on a dialog box are too many." Yet it is easy to see that a dialog box with 35 buttons is probably to be avoided. The major difficulty with such analysis is that it treats the problem in vitro. It doesn't take into account the problem being solved; it doesn't take into account what the user is doing at the time or what he is trying to accomplish.

Adding finesse: Less is more

For many things, more is better. In the world of interface design, the contrary is true, and we should constantly strive to reduce the number of elements in the interface without reducing the power of the system. In order to do this, we must do more with less; this is where careful orchestration becomes important. We must coordinate and control all the power of the product without letting the interface become a gaggle of windows and dialogs, covered with a scattering of unrelated and rarely used controls.

It's easy to create interfaces that are complex but not very powerful. They typically allow the user to perform a single task without providing access to related tasks. For example, most desktop software allows the user to name and save a data file, but it never lets him delete, rename, or make a copy of that file at the same time. The dialog leaves that task to the operating system. It may not be trivial to add these functions, but isn't it better that the programmer perform the non-trivial activities than that the user be forced to? Today, if the user wants to do something simple, like edit a copy of a file, he must go through a non-trivial sequence of actions: going to the desktop, selecting the file, requesting a copy from the menu, changing its name, and then opening the new file. Why not streamline this interaction?

It's not as difficult as it looks. Orchestration doesn't mean bulldozing your way through problems; it means finessing them wherever possible. Instead of adding the File Copy and Rename functions to the File Open dialog box of every application, why not just discard the File Open dialog box from every application and replace it with the shell program itself? When the user wants to open a file, the program calls the shell, which conveniently has all those collateral file manipulation functions built in, and the user can double-click on the desired document. True, the application's File Open dialog does show the user a filtered view of files (usually limited to those formats recognized by the application), but why not add that functionality to the shell -- filter by type in addition to sort by type?
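To make the "filter by type in addition to sort by type" suggestion concrete, here is a small sketch of a shell-style file listing that offers both. The function `shell_listing` and the sample file names are hypothetical; no real shell API is being described.

```python
from pathlib import PurePath


def shell_listing(names, allowed_suffixes=None):
    """Sort file names by type; optionally keep only the given suffixes.

    allowed_suffixes=None means "no filter", i.e. plain sort by type.
    """
    paths = [PurePath(name) for name in names]
    if allowed_suffixes is not None:
        wanted = {suffix.lower() for suffix in allowed_suffixes}
        paths = [p for p in paths if p.suffix.lower() in wanted]
    # Order by file type first, then by name within each type.
    paths.sort(key=lambda p: (p.suffix.lower(), p.name.lower()))
    return [p.name for p in paths]


files = ["notes.txt", "budget.xls", "letter.doc", "memo.DOC", "todo.txt"]

print(shell_listing(files))            # sorted by type only
print(shell_listing(files, [".doc"]))  # filtered to one type, as a File Open dialog would
```

An application could pass the suffixes it recognizes and get the same filtered view its own File Open dialog would have shown, while the user keeps every other shell function.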

Following this logic, we can also dispense with the Save As... dialog, which is really the logical inverse of the File Open dialog. If every time we invoked the Save As... function from our application, it wrote our file out to a temporary directory under some reasonable temporary name and then transferred control to the shell, we'd have all the shell tools at our disposal to move things around or rename them.

Yes, there would be a chunk of code that programmers would have to create to make it all seamless, but look at the upside. Countless dialog boxes could be completely discarded, and the user interfaces of thousands of programs would become more visually and functionally consistent, all with a single design stroke. That is finesse!

Distinguishing

possibility from probability

There are

many cases where

interaction, usually in the

form of a dialog box, slips

into

a user

interface unnecessarily. A frequent

source for such clinkers is

when a program

is faced

with a choice. That's

because programmers tend to

resolve choices from

the

standpoint

of logic, and it carries over to

their software design. To a

logician, if a

proposition

is true 999,999 times out of

a million and false one

time, the proposition

is false

-- that's the way Boolean

logic works. However, to the

rest of us, the

proposition

is overwhelmingly true. The

proposition has a possibility of

being false,

but

the probability of it being

false is minuscule to the

point of irrelevancy. One of

the

most

potent methods for better

orchestrating your user

interfaces is segregating

the

possible

from the probable.

Programmers

tend to view possibilities as

being the same as

probabilities. For

example,

a user has the choice of

ending the program and

saving his work, or

ending

the

program and throwing away

the document he has been

working on for the last

six

hours.

Mathematically, either of these choices

is equally possible. Conversely,

the

probability

of the user discarding his

work is, at least, a thousand to

one against; yet

the

typical program always

includes a dialog box asking

the user if he wants to

save

his

changes.

The

dialog box is inappropriate

and unnecessary. How often

do you choose to

abandon

changes you make to a

document? This dialog is

tantamount to your

spouse

telling

you not to spill soup on

your shirt every time

you eat.
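One way to act on the probable rather than the merely possible is to make saving the default result of closing a document, and to treat discarding as a separate, explicit request. The following is a minimal Python sketch under that assumption. The `Document` class, its fields, and its `close` method are hypothetical names invented for illustration and do not come from any real application.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    """Hypothetical document model used only for this illustration."""
    text: str = ""
    saved_text: str = ""
    discarded_drafts: list = field(default_factory=list)

    def close(self, discard: bool = False) -> str:
        """Close without asking: save unless the user explicitly discards."""
        if discard:
            # The improbable case. Keep the draft so the choice is recoverable.
            self.discarded_drafts.append(self.text)
            self.text = self.saved_text
            return "discarded"
        # The overwhelmingly probable case needs no confirmation dialog.
        self.saved_text = self.text
        return "saved"


doc = Document(text="six hours of work")
print(doc.close())  # the common path involves no question at all
```

Keeping the discarded draft in `discarded_drafts` is a further assumption of this sketch, so that even the rare choice remains reversible.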


Providing comparisons

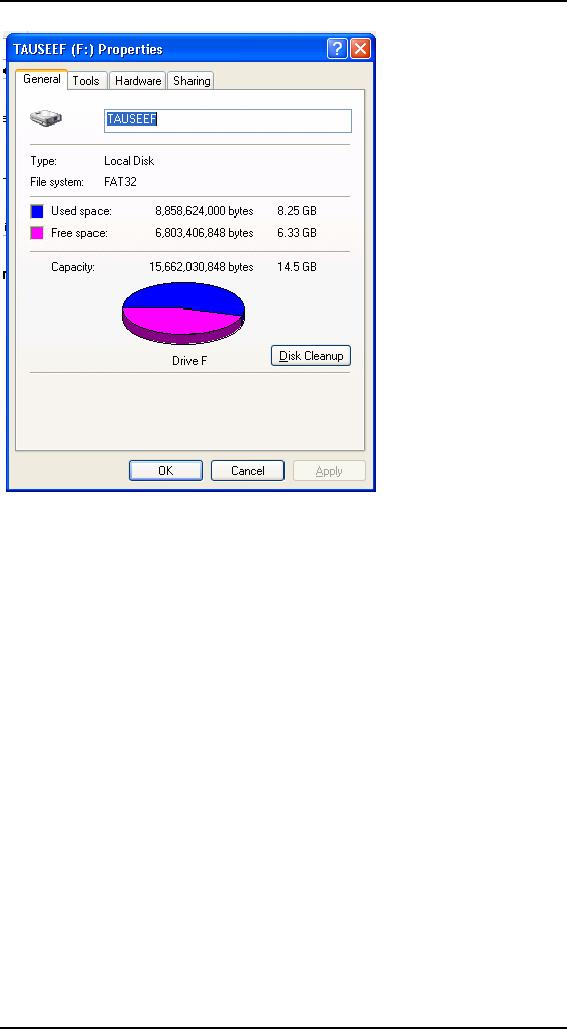

The

way that a program

represents information is another

way that it can

obtrude

noisily

into a user's consciousness.

One area frequently abused

is the representation of

quantitative,

or numeric, information. If an

application needs to show

the amount of

free

space on disk, it can do

what the Microsoft Windows

3.x File Manager

program

did:

give you the exact

number of free bytes.

In the

lower-left corner, the

program tells us the number

of free bytes and the

total

number of

bytes on the disk. These

numbers are hard to read and

hard to interpret.

With

more than ten thousand

million bytes of disk storage,

it ceases to be important to

us just

how many hundreds are

left, yet the display

rigorously shows us down to

the

kilobyte.

But even while the

program is telling us the

state of our disk with

precision,

it is

failing to communicate. What we

really need to know is whether or

not the disk

is

getting full, or whether we

can add a new 20 MB program

and still have

sufficient

working

room. These raw numbers, precise as

they are, do little to help

make sense of

the

facts.

Visual

presentation expert Edward

Tufte says that quantitative

presentation should

answer

the question, "Compared to

what?" Knowing that 231,728

KB are free on your

hard

disk is less useful than

knowing that it is 22 percent of

the disk's total

capacity.

Another

Tufte dictum is, "Show

the data," rather than

simply telling about it

textually

or

numerically. A pie chart

showing the used and unused

portions in different

colors

would

make it much easier to

comprehend the scale and

proportion of hard disk use.

It

would

show us what 231,728 KB

really means. The numbers

shouldn't go away,

but

they

should be relegated to the

status of labels on the

display and not be the

display

itself.

They should also be shown

with more reasonable and consistent

precision. The

meaning

of the information could be

shown visually, and the

numbers would merely

add

support.
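The "Compared to what?" idea can be sketched in a few lines of Python. This is an illustrative sketch only: the function names are hypothetical, and the total capacity of 1,053,300 KB is an assumed value chosen so that 231,728 KB free works out to roughly 22 percent, as in the example above.

```python
def human_size(kb: float) -> str:
    """Format a size given in kilobytes with modest, consistent precision."""
    units = ["KB", "MB", "GB", "TB"]
    size = float(kb)
    for unit in units:
        if size < 1024 or unit == units[-1]:
            # Whole numbers for large values, one decimal for small ones.
            return f"{size:.0f} {unit}" if size >= 10 else f"{size:.1f} {unit}"
        size /= 1024


def describe_free_space(free_kb: float, total_kb: float) -> str:
    """Lead with the comparison; keep the raw sizes as supporting labels."""
    percent = round(100 * free_kb / total_kb)
    return f"{percent}% free ({human_size(free_kb)} of {human_size(total_kb)})"


def usage_bar(free_kb: float, total_kb: float, width: int = 20) -> str:
    """Show the data: a text bar with '#' for used space and '.' for free."""
    used_cells = round(width * (total_kb - free_kb) / total_kb)
    return "[" + "#" * used_cells + "." * (width - used_cells) + "]"


print(usage_bar(231_728, 1_053_300), describe_free_space(231_728, 1_053_300))
# prints: [################....] 22% free (226 MB of 1.0 GB)
```

The text bar stands in for the pie chart here. The point is only that the proportion is the display and the numbers are its labels.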

In

Windows XP, Microsoft's

right hand giveth while

its left hand taketh

away. The

File

Manager is long dead, replaced by

the Explorer dialog box

shown in Figure.


This

replacement is the properties

dialog associated with a

hard disk. The Used

Space

is shown

in blue and the Free Space

is shown in magenta, making

the pie chart an

easy

read. Now you can

see at a glance the glad

news that GranFromage is

mostly

empty.

Unfortunately,

that pie chart isn't

built into the Explorer's

interface. Instead, you

have

to seek

it out with a menu item. To

see how full a disk

is, you must bring up a

modal

dialog

box that, although it gives

you the information, takes

you away from the

place

where

you need to know it. The

Explorer is where you can

see, copy, move,

and

delete

files; but it's not

where you can easily

see if things need to be deleted.

That pie

chart

should have been built into

the face of the Explorer. In

Windows 2000, it is

shown on

the left-hand side when

you select a disk in an Explorer

window. In XP,

however,

Microsoft took a step

backwards, and the graphic

has once again been

relegated

to a dialog. It really should be

visible at all times in the

Explorer, along with

the

numerical data, unless the

user chooses to hide

it.

Using graphical input

Software

frequently fails to present numerical

information in a graphical way.

Even

rarer is

the capability of software to

enable graphical input. A

lot of software lets

users

enter numbers; then, on

command, it converts those numbers into a

graph. Few

products

let the user enter a

graph and, on command,

convert that graph into a

vector

of

numbers. By contrast, most modern

word processors let you

set tabs and

indentations

by dragging a marker on a ruler.

The user can say, in effect,

"Here is


where I

want the paragraph to

start," and let the

program calculate that it is

precisely

1.347

inches in from the left

margin instead of forcing

the user to enter

1.347.
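The calculation the program performs on the user's behalf is small. Here is a minimal sketch, assuming the ruler reports the marker and margin positions in pixels and that the display resolution is known. The function name, its parameters, and the 96 pixels-per-inch figure are assumptions made for illustration.

```python
def indent_from_drag(marker_px: float, margin_px: float,
                     pixels_per_inch: float = 96.0) -> float:
    """Convert a dragged ruler marker position into an indent in inches."""
    return round((marker_px - margin_px) / pixels_per_inch, 3)


# The user only drags; the program derives the number.
print(indent_from_drag(marker_px=225.312, margin_px=96.0))
```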

"Intelligent"

drawing programs like

Microsoft Visio are getting

better at this. Each

polygon

that the user manipulates on

screen is represented behind the

scenes by a

small

spreadsheet, with a row for

each point and a column

each for the X and

Y

coordinates.

Dragging a polygon's vertex on screen changes the X and Y values in the corresponding row of the spreadsheet.

The

user can access the

shape either graphically or

through its

spreadsheet

representation.

This

principle applies in a variety of

situations. When items in a

list need to be

reordered,

the user may want

them ordered alphabetically,

but he may also want

them

in order

of personal preference, something no

algorithm can offer. The

user should be

able to

drag the items into

the desired order directly,

without an algorithm

interfering

with

this fundamental

operation.
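Behind a drag-to-reorder gesture, the program needs little more than a move operation on the underlying list. A minimal sketch follows; `move_item` is a hypothetical helper name, and the indices are assumed to come from the drag start and drop positions.

```python
def move_item(items, from_index, to_index):
    """Return a new list with one item moved to the position where it was dropped."""
    reordered = list(items)          # leave the caller's list untouched
    item = reordered.pop(from_index)
    reordered.insert(to_index, item)
    return reordered


favorites = ["Apricot", "Banana", "Cherry", "Date"]
# The user drags "Date" to the top; no sorting algorithm is involved.
print(move_item(favorites, 3, 0))
# prints: ['Date', 'Apricot', 'Banana', 'Cherry']
```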

Reflecting program status

When

someone is asleep, he usually looks

asleep. When someone is awake, he

looks

awake.

When someone is busy, he

looks busy: His eyes

are focused on his work

and

his

body language is closed and

preoccupied. When someone is

unoccupied, he looks

unoccupied:

His body is open and

moving, his eyes are

questing and willing to

make

contact.

People not only expect

this kind of subtle feedback

from each other,

they

depend on

it for maintaining social

order.

Our

programs should work the

same way. When a program is

asleep, it should look

asleep.

When a program is awake, it

should look awake; and

when it's busy, it

should

look

busy. When the computer is

engaged in some significant internal

action like

formatting

a diskette, we should see

some significant external

action, such as the

icon

of the

diskette slowly changing

from grayed to active state.

When the computer is

sending a

fax, we should see a small

representation of the fax

being scanned and

sent

(or at

least a modeless progress bar). If the

program is waiting for a

response from a

remote

database, it should visually change to

reflect its somnolent state.

Program state

is best

communicated using forms of

rich modeless

feedback.

Avoiding unnecessary reporting

For

programmers, it is important to know

exactly what is happening process-wise in

a

program.

This goes along with

being able to control all

the details of the process.

For

users, it

is disconcerting to know all

the details of what is

happening. Non-technical

people

may be alarmed to hear that

the database has been

modified, for example. It

is

better

for the program to just do

what has to be done, issue

reassuring clues when all

is well,

and not burden the

user with the trivia of

how it was

accomplished.

Many

programs are quick to keep

users apprised of the

details of their progress

even

though

the user has no idea

what to make of this

information. Programs pop up

dialog

boxes

telling us that connections

have been made, that records have been

posted, that

users

have logged on, that

transactions were recorded, that data

have been transferred,

and

other useless factoids. To

software engineers, these

messages are equivalent to

the

humming

of the machinery, the

babbling of the brook, the

white noise of the

waves

crashing

on the beach: They tell us

that all is well. They

were, in fact, probably

used

while

debugging the software. To

the user, however, these

reports can be like

eerie

lights

beyond the horizon, like

screams in the night, like

unattended objects

flying

about

the room.


As

discussed before, the

program should make clear

that it is working hard, but

the

detailed

feedback can be offered in a

more subtle way. In

particular, reporting

information

like this with a modal

dialog box brings the

interaction to a stop for no

particular

benefit.

It is

important that we not stop

the proceedings to report

normalcy. When some

event

has

transpired that was supposed

to have transpired, never

report this fact with

a

dialog

box. Save dialogs for events

that are outside of the

normal course of

events.

By the

same token, don't stop the

proceedings and bother the

user with problems

that

are not

serious. If the program is having

trouble getting through a

busy signal, don't

put up a

dialog box to report it.

Instead, build a status

indicator into the program

so

the

problem is clear to the

interested user but is not

obtrusive to the user who is

busy

elsewhere.
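The routing rule described above, status line for normal and minor events and a dialog only for the exceptional, can be sketched as follows. The `Severity` levels and the `Notifier` class are hypothetical names introduced for illustration. A real application would attach them to its own status bar and dialog facilities.

```python
from enum import Enum


class Severity(Enum):
    NORMAL = 1       # expected event: connection made, record posted
    MINOR = 2        # recoverable trouble: busy signal, retrying
    EXCEPTIONAL = 3  # outside the normal course of events


class Notifier:
    """Routes reports to a modeless status line or, rarely, to a dialog."""

    def __init__(self):
        self.status_line = ""  # visible to the interested user, never blocking
        self.dialogs = []      # messages that interrupt the user

    def report(self, message: str, severity: Severity) -> None:
        if severity is Severity.EXCEPTIONAL:
            self.dialogs.append(message)  # the only case that stops the proceedings
        else:
            self.status_line = message    # normalcy and minor trouble stay modeless


notifier = Notifier()
notifier.report("Line busy, retrying", Severity.MINOR)
print(notifier.status_line, len(notifier.dialogs))
# prints: Line busy, retrying 0
```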

The

key to orchestrating the

user interaction is to take a

goal-directed approach.

You

must ask

yourself whether a particular

interaction moves the user

rapidly and directly

to his

goal. Contemporary programs

are often reluctant to take

any forward motion

without

the user directing it in

advance. But users would

rather see the program

take

some

"good enough" first step

and then adjust it to what

is desired. This way,

the

program

has moved the user

closer to his goal.

Avoiding blank slates

It's

easy to assume nothing about

what your users want

and instead ask a bunch

of

questions

of the user up front to help

determine what they want.

How many programs

have

you seen that start with a

big dialog asking a bunch of

questions? But users --

not

power users, but normal

people -- are very

uncomfortable with explaining to

a

program

what they want. They

would much rather see

what the program thinks

is

right

and then manipulate that to

make it exactly right. In most

cases, your program

can

make a fairly correct

assumption based on past

experience. For example,

when

you

create a new document in Microsoft

Word, the program creates a

blank document

with

preset margins and other

attributes rather than

opening a dialog that asks

you to

specify

every detail. PowerPoint

does a less adequate job,

asking you to choose

the

base

style for a new presentation

each time you create one.

Both programs could

do

better by

remembering frequently and

recently used styles or

templates, and making

those the

defaults for new

documents.
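Remembering frequently and recently used templates does not require any intelligence in the human sense. A minimal sketch, assuming the program simply counts past choices and breaks ties by recency, might look like this. The `TemplateHistory` class and the template names are hypothetical.

```python
from collections import Counter


class TemplateHistory:
    """Tracks past template choices to pick a statistically likely default."""

    def __init__(self, fallback: str = "Blank"):
        self.fallback = fallback
        self.uses = Counter()
        self.last_used = None

    def record(self, name: str) -> None:
        self.uses[name] += 1
        self.last_used = name

    def default(self) -> str:
        if not self.uses:
            return self.fallback  # no history yet: start from a sensible preset
        top = max(self.uses.values())
        candidates = [n for n, count in self.uses.items() if count == top]
        # Break ties in favour of the most recent choice.
        return self.last_used if self.last_used in candidates else candidates[0]


history = TemplateHistory()
for choice in ["Report", "Memo", "Report"]:
    history.record(choice)
print(history.default())
# prints: Report
```

The user can still pick any other template. The default is only a "good enough" first step.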

Just

because we use the word

think in conjunction with a

program doesn't mean that

the

software needs to be intelligent

(in the human sense)

and try to determine the

right

thing to

do by reasoning. Instead, it should

simply do something that has

a statistically

good

chance of being correct,

then provide the user

with powerful tools for

shaping

that

first attempt, instead of

merely giving the user a

blank slate and challenging

him

to have

at it. This way the

program isn't asking for

permission to act, but rather

asking

for

forgiveness after the

fact.

For most

people, a completely blank slate is a

difficult starting point.

It's so much

easier to

begin where someone has

already left off. A user

can easily fine-tune

an

approximation

provided by the program into

precisely what he desires

with less risk

of

exposure and mental effort

than he would have from

drafting it from

nothing.

Command invocation versus configuration

Another

problem crops up quite frequently,

whenever functions with

many

parameters

are invoked by users. The

problem comes from the

lack of differentiation

between a

function and the

configuration of that function. If

you ask a program to


perform a

function itself, the program

should simply perform that

function and not

interrogate

you about your precise

configuration details. To express precise

demands

to the

program, you would request

the configuration dialog.

For example, when

you

ask

many programs to print a

document, they respond by

launching a complex

dialog

box

demanding that you specify

how many copies to print,

what the paper

orientation

is,

what paper feeder to use,

what margins to set, whether

the output should be

in

monochrome

or color, what scale to

print it at, whether to use

Postscript fonts or

native

fonts, whether to print the

current page, the current

selection, or the

entire

document,

and whether to print to a

file and if so, how to

name that file. All

those

options

are useful, but all we

wanted was to print the

document, and that is all

we

thought

we asked for.

A much

more reasonable design would be to

have a command to print and

another

command

for print setup. The print

command would not issue

any dialog, but

would

just go

ahead and print, either

using previous settings or standard,

vanilla settings. The

print

setup function would offer up

all those choices about paper

and copies and fonts.

It would

also be very reasonable to be able to go

directly from the configure

dialog to

printing.
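The separation of invoking from configuring can be sketched as two distinct commands that share remembered settings. This is an illustrative sketch, not how any particular word processor is implemented. `PrintSettings`, `Printer`, `print_now`, and `configure` are hypothetical names, and only three of the many settings mentioned above are modeled.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PrintSettings:
    copies: int = 1
    orientation: str = "portrait"
    color: bool = False


class Printer:
    def __init__(self):
        self.settings = PrintSettings()  # standard, vanilla settings
        self.jobs = []                   # stands in for the real print queue

    def print_now(self, document: str) -> None:
        """Invoke: no dialog, just print with the previous or default settings."""
        self.jobs.append((document, self.settings))

    def configure(self, document=None, print_after=False, **changes) -> None:
        """Configure: change settings and, if asked, go straight on to printing."""
        self.settings = replace(self.settings, **changes)
        if print_after and document is not None:
            self.print_now(document)


printer = Printer()
printer.print_now("letter.txt")                                  # the common case
printer.configure("report.txt", print_after=True, copies=2)      # the rare case
printer.print_now("memo.txt")                                    # reuses copies=2
print([settings.copies for _, settings in printer.jobs])
# prints: [1, 2, 2]
```

Configuration may lead into invocation, but invocation never drags the configuration along with it.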

The

print control on the Word

toolbar offers immediate

printing without a dialog

box.

This is

perfect for many users,

but for those with

multiple printers or printers on

a

network,

it may offer too little

information. The user may

want to see which printer

is

selected

before he either clicks the

control or summons the

dialog to change it first.

This is a

good candidate for some

simple modeless output

placed on a toolbar or

status

bar (it is currently

provided in the ToolTip for

the control, which is good,

but

the

feedback could be better

still). Word's print setup

dialog is called Print...

and is

available

from the File menu.

Its name could be clearer,

although the ellipsis

does,

according

to GUI standards, give some inkling

that it will launch a

dialog.

There is

a big difference between

configuring and invoking a

function. The former

may

include the latter, but

the latter shouldn't include

the former. In general, any

user

invokes a

command ten times for

every one time he configures

it. It is better to

make

the

user ask explicitly for

configuration one time in

ten than it is to make the

user

reject

the configuration interface

nine times in ten.

Microsoft's

printing solution is a reasonable rule of

thumb. Put immediate access

to

functions

on buttons in the toolbar

and put access to

function-configuration dialog

boxes on

menu items. The

configuration dialogs are

better pedagogic tools,

whereas

the

buttons provide immediate

action.

Asking questions versus providing choices

Asking

questions is quite different

from providing choices. The

difference between

them is

the same as that between

browsing in a store and conducting a

job interview.

The

individual asking the

questions is understood to be in a

position superior to

the

individual

being asked. Those with authority

ask questions; subordinates

respond.

Asking

users questions makes them

feel inferior.

Dialog

boxes (confirmation dialogs in

particular) ask questions.

Toolbars offer

choices.

The confirmation dialog

stops the proceedings, demands an answer,

and it

won't

leave until it gets what it

wants. Toolbars, on the other

hand, are always

there,

quietly

and politely offering up

their wares like a

well-appointed store, offering

you

the

luxury of selecting what you

would like with just a

flick of your finger.

Contrary

to what many software

developers think, questions

and choices don't

necessarily

make the user feel

empowered. More commonly, they

make the user

feel


badgered

and harassed. Would you

like soup or salad? Salad.

Would you like

cabbage

or spinach?

Spinach. Would you like

French, Thousand Island, or

Italian? French.

Would

you like lo-cal or regular?

Stop! Just bring me the

soup! Would you

like

chowder

or chicken noodle?

Users

don't like to be asked questions. It

cues the user that

the program is:

· Ignorant
· Forgetful
· Weak
· Lacking initiative
· Unable to fend for itself
· Fretful
· Overly demanding

These are

qualities that we typically

dislike in people. Why

should we desire them in

software?

The program is not asking us

our opinion out of

intellectual curiosity or

desire to

make conversation, the way a

friend might over dinner.

Rather, it is

behaving

ignorantly or presenting itself

with false authority. The

program isn't

interested

in our opinions; it requires

information -- often information it

didn't really

need to

ask us in the first place.

Worse

than single questions are

questions that are asked

repeatedly and

unnecessarily.

Do you

want to save that file? Do

you want to save that

file now? Do you really

want

to save

that file? Software that

asks fewer questions appears

smarter to the user,

and

more

polite, because if users

fail to know the answer to a

question, they then

feel

stupid.

In The

Media Equation (Cambridge

University Press, 1996),

Stanford sociologists

Clifford

Nass and Byron Reeves

make a compelling case that

humans treat and

respond

to computers and other interactive

products as if they were

people. We should

thus

pay real attention to the

"personality" projected by our

software. Is it quietly

competent

and helpful, or does it

whine, nag, badger, and

make excuses?

Choices

are important, but there is

a difference between being

free to make choices

based on

presented information and being

interrogated by the program in

modal

fashion.

Users would much rather

direct their software the

way they direct

their

automobiles

down the street. An automobile

offers the user

sophisticated choices

without

once issuing a dialog

box.

Hiding ejector seat levers

In the

cockpit of every jet fighter

is a brightly painted lever

that, when pulled, fires

a

small

rocket engine underneath the

pilot's seat, blowing the

pilot, still in his seat,

out

of the

aircraft to parachute safely to

earth. Ejector seat levers

can only be used

once,

and

their consequences are

significant and

irreversible.

Just

as a jet fighter needs an

ejector seat lever, complex

desktop applications need

configuration

facilities. The vagaries of

business and the demands

placed on the

software

force it to adapt to specific

situations, and it had

better be able to do

so.

Companies

that pay millions of dollars

for custom software or site licenses

for

thousands of copies of

shrink-wrapped products will not

take kindly to a

program's

inability

to adapt to the way things

are done in that particular

company. The program

must

adapt, but such adaptation

can be considered a one-time

procedure, or something


done

only by the corporate IT

staff on rare occasions. In other words,

ejector seat

levers

may need to be used, but

they won't be used very

often.

Programs must

have ejector seat levers so

that users can --

occasionally -- move

persistent

objects in the interface, or

dramatically (sometimes irreversibly)

alter the

function

or behavior of the application.

The one thing that must

never happen is

accidental

deployment of the ejector

seat. The interface design

must ensure that the

user

can never inadvertently fire

the ejector seat when

all he wants to do is make

some

minor

adjustment to the

program.

Ejector

seat levers come in two basic

varieties: those that cause

a significant visual

dislocation

(large changes in the layout

of tools and work areas) in

the program, and

those

that perform some

irreversible action. Both of

these functions should be

hidden

from

inexperienced users. Of the

two, the latter variety is

by far the more

dangerous.

In the

former, the user may be

surprised and dismayed at

what happens next, but

he

can at

least back out of it with

some work. In the latter

case, he and his colleagues

are

likely to

be stuck with the

consequences.
